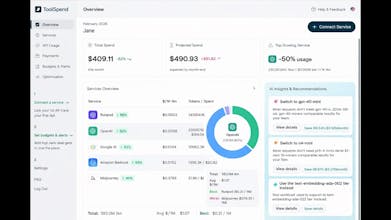

Stop losing money on forgotten SaaS subscriptions and "ghost" licenses. Toolspend is the ultimate command center for your stack, designed to give you 100% spend visibility without the manual upkeep. While other tools just list your apps, Toolspend deep-dives into your actual usage and spend patterns. We identify underutilized seats, detect duplicate tools across teams, and alert you before every renewal. Toolspend helps you automate the toil of procurement so you can focus on building!

Toolspend

Hey Product Hunt!

AI tools are exploding inside companies.

What isn’t exploding? Visibility into what they actually cost.

Teams are subscribing to ChatGPT, Claude, Midjourney, Cursor, Perplexity, ElevenLabs… and finance only finds out when the bill hits.

The real problem?

AI usage (tokens) and actual spend are completely disconnected.

That’s why we built ToolSpend.

It connects your AI services + banking data and shows:

• What you're really spending

• Which teams are driving usage

• Where you’re overpaying

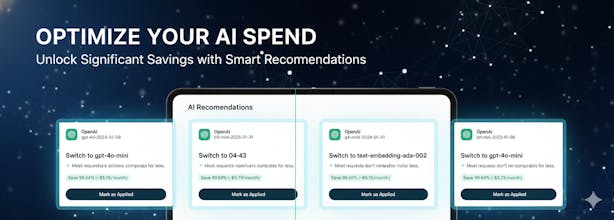

• Smarter model alternatives

AI shouldn’t be the next AWS surprise bill.

Excited to hear your feedback, especially from founders & dev teams already scaling with AI.

@visagar This is such a smart niche to go after! 👏 I (well, the whole team) use a lot of AI tools daily, and tracking what we’re actually spending manually… it gets messy fast. Love the idea!

I’m curious – how granular can the team-level visibility get? Can you attribute usage down to specific projects or cost centers, or is it mainly per tool / per team right now?

Toolspend

@tereza_hurtova Love this question and honestly… I’ll give you the real answer.

Right now, it’s strong at:

• Per tool visibility (OpenAI, Anthropic, etc.)

• Per API key / account

• Team-level rollups

• Token + cost breakdown where the provider allows it

But project-level / cost-center attribution?

That’s where we want to go — and we’re figuring that out with users.

Truthfully, we didn’t want to overbuild assumptions about how teams structure AI spend. Some companies use separate API keys per project. Others share keys across everything. Some think in “cost centers,” others think in “clients,” others just want “who ran this model and why did it spike?”

So instead of guessing the perfect structure upfront, we’re starting with clear usage visibility and letting real usage patterns guide the next layer.

What we’re already seeing:

Even one provider (like OpenAI) behaves like 5–10 different cost centers depending on models, environments, and use cases. That’s the real chaos we’re trying to bring clarity to.

So short honest answer:

Granular at tool + key + team level today.

Project-level attribution is something we’re actively shaping based on feedback like yours.

If you had your ideal setup — would you want spend broken down by client, by internal product, or by something else entirely?

@visagar Appreciate the transparent answer! That approach makes a lot of sense to me. We’re building our product too, and I relate to that tension between the long-term vision and what’s realistic today. There’s always the “ideal structure” in your head… and then there’s the messy reality you discover through users. 😀

Starting with clear visibility and letting real usage patterns shape the next layer feels like the right call.

If I imagine an ideal setup, I’d probably want flexibility – the ability to view spend by client and by internal product/project.

In our case, cost centers shift depending on whether we’re experimenting, building core features, or running infra. So being able to re-group dynamically would be powerful.

And you’re right – even one provider can behave like multiple cost centers. That’s exactly where the chaos starts.

Excited to see how you evolve the attribution layer! 🙂

Product Hunt Wrapped 2025

Yep, rogue AI seats are killing us. Surprise Midjourney/Cursor/Claude charges and then finance pings me. If this actually maps tokens to teams + nudges before renewals, that’d help. Does it catch stuff paid on personal cards that get expensed later?

Toolspend

@alexcloudstar Yep, Runway, Midjourney, Cursor, and Claude are the usual suspects.

ToolSpend connects via Plaid, so both company accounts and personal cards (that later get expensed) can be pulled in and don’t stay invisible.

From there we map usage to teams and nudge before renewals so finance doesn’t get surprise bills.

We’re actively adding more AI services over the coming days.

This is super timely with everyone subscribing to 5 different AI tools. :D

Does the tool separate variable costs from fixed seat-based subscriptions?

Toolspend

@valeriia_kuna Yes — that separation is core for us.

We split variable usage (like token-based API costs that can spike) from fixed seat-based subscriptions (which quietly stack up across teams).

Without that distinction, finance just sees one big number — and it’s hard to know what’s actually driving spend.
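To make the fixed-versus-variable split concrete, here is a minimal sketch. It is not ToolSpend's implementation: the `Charge` record, its `kind` labels ("seat" and "usage"), and the `split_fixed_variable` helper are all hypothetical names chosen for illustration, and amounts are assumed to be integer cents.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Charge:
    """Hypothetical line item; field names are assumptions, not a real schema."""
    vendor: str
    amount_cents: int
    kind: str  # "seat" for fixed subscriptions, "usage" for token-based billing


def split_fixed_variable(charges):
    """Total each vendor's spend into fixed (seat) and variable (usage) buckets."""
    totals = defaultdict(lambda: {"fixed": 0, "variable": 0})
    for charge in charges:
        if charge.kind == "seat":
            bucket = "fixed"
        elif charge.kind == "usage":
            bucket = "variable"
        else:
            # Refuse to guess: an unknown kind would silently distort the totals.
            raise ValueError(f"unknown charge kind: {charge.kind!r}")
        totals[charge.vendor][bucket] += charge.amount_cents
    return dict(totals)
```

With per-vendor totals in this shape, a finance view can show the seat costs that stack up steadily next to the usage costs that can spike, instead of one combined number.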

Congrats on the launch! The gap between token usage and actual financial impact is very real, especially as teams experiment across multiple AI tools. How does ToolSpend attribute usage and cost to specific teams or projects when accounts and API keys are often shared across departments?

Toolspend

@vik_sh great question. This is exactly where AI spend gets blurry.

You’re right: shared API keys and pooled accounts are common, which makes attribution tricky.

Right now ToolSpend works in layers:

• Financial layer (via Plaid): gives us the ground truth of what’s actually being paid and from which account.

• Usage layer: where providers expose token or project metadata, we ingest that and track usage patterns over time.

• Attribution rules: for shared keys, we support tagging conventions (project IDs, sub-keys, env variables) and are building allocation logic (usage %, time-based splits, cost center rules).
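The "usage %" allocation idea for shared keys can be sketched as follows. This is an illustration under stated assumptions, not ToolSpend's actual logic: the `allocate_by_usage` function, the team names, and the figures are hypothetical, and the bill is assumed to arrive as a `Decimal` amount with per-team token counts already known.

```python
from decimal import Decimal, ROUND_HALF_UP


def allocate_by_usage(total_cost, tokens_by_team):
    """Split one shared bill across teams in proportion to their token usage."""
    total_tokens = sum(tokens_by_team.values())
    if total_tokens == 0:
        raise ValueError("no usage recorded; cannot allocate proportionally")

    cent = Decimal("0.01")
    teams = sorted(tokens_by_team)  # fixed order keeps the result deterministic
    shares = {}
    allocated = Decimal("0")
    for team in teams[:-1]:
        share = (total_cost * tokens_by_team[team] / total_tokens).quantize(
            cent, rounding=ROUND_HALF_UP
        )
        shares[team] = share
        allocated += share
    # The last team absorbs the rounding remainder so shares sum to the bill.
    shares[teams[-1]] = total_cost - allocated
    return shares


if __name__ == "__main__":
    bill = Decimal("120.00")
    usage = {"growth": 3000, "platform": 1000}  # hypothetical token counts
    print(allocate_by_usage(bill, usage))
```

Giving the remainder to one team is a simplification; a time-based split or cost-center rule would replace the proportional step while keeping the same "shares must sum to the bill" check.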

The goal isn’t just to show spend — it’s to connect spend → usage → team → outcome.

We’re still refining this based on how real teams operate.

How are you currently handling shared API keys internally — strict key isolation, tagging discipline, or more of a manual reconciliation process?

This hits home. 💸 The 'ghost licenses' problem is real and honestly, it's the same mindset I bring to building tools.

You know what else suffers from the same 'set it and forget it' drain? Risk engines. Companies pay thousands monthly for enterprise risk tools, using maybe 20% of the features, while the other 80% is just bloat they never touch.

Quick question: When you look at your stack, what's the most painful 'ghost spend' you've discovered? And if you could wave a wand, what would the ideal tool look like that actually respects your budget and gives you exactly what you need, nothing more?

Toolspend

@noha_elmeselhy The worst ghost spend I see?

AI seats + usage-based billing stacked together.

You pay for 10 seats…

Then tokens quietly rack up in the background.

Unused seats + surprise usage = double ghosting.

If I could wave a wand:

• Clear fixed vs variable costs

• Usage tied to people/projects

• Alerts before renewals

• Show waste + smarter alternatives

No fluff. Just “here’s where you’re leaking money.”

That’s exactly the problem we’re tackling.

Who you gonna call? Ghost-license busters! :D 2026 is officially the year of too many AI subscriptions. Love the model alternative feature. Any plans for a one-click cancel button inside the dashboard?

Toolspend

@kostfast Thanks, Kostia — glad you like the model alternative feature.

A one-click cancel action is something we’re considering. Because cancellation flows differ by provider (and aren’t always supported via API), we’re prioritizing a safe “disconnect + stop spend” workflow first, then adding one-click cancel where possible. Which providers would be most useful for you?

@papuna_giorgadze1 OpenAI, Anthropic, and Midjourney for sure. We switch AI tools so fast these days, so a one-click move for the whole stack is exactly what we need. Good luck with the build!