WEIR AI

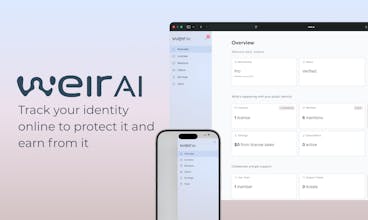

Track your identity online to protect it or earn from it

306 followers

WEIR AI is a privacy-first platform to help you find and protect yourself online. Set your terms, monitor for mentions (including hidden ones), get public identity checkups, and file claims or license on your terms.

WEIR AI

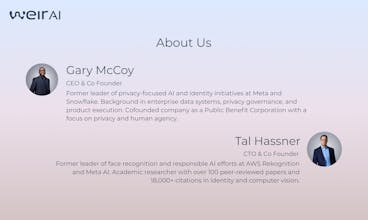

I never expected to get grilled and testify in billion-dollar lawsuits, but there I was. The topics: privacy, consent, biometric data. I’ll spare you the details, but honestly it’s less about those lawsuits and more about the experiences that led up to them.

What I came to understand is this: around the world, your public identity — how you show up online — can impact your life, your freedom, and your finances in ways you’d never expect. And the wave of AI tools has made the cost of manipulating someone’s identity effectively zero.

My co-founder, Tal, and I saw this wave coming years ago and knew we had to do something about it.

WEIR AI is a privacy and consent-centric identity rights platform. We help people track their identity online, to protect it or earn from it.

Building it meant reinventing identity recognition technology from scratch, purpose-built to put people in control without limiting their commercial options.

Nothing off the shelf could do what we needed, so we built it ourselves. We formed WEIR as a Public Benefit Corporation with a mission rooted in the privacy, safety, and security of people and institutions. For us, that was never a question.

We’ve been grinding for a while and documenting some of it on social media.

The good news? Our instincts turned out to be dead on.

The less-than-good news? It’s taken much more effort, resources, and time than we ever planned for.

But we’re here now, and we’re genuinely excited to show you what we’ve been working on.

We’ve learned from our design partners and customers about their needs and how we can help. We have a lot more ahead of us, but we’re proud of what we’ve built and how it’s already impacting people’s lives.

Whether you’re the biggest celebrity in the world or a regular Joe, WEIR AI helps you protect, control, and benefit from what’s uniquely yours — your public identity.

@gary_mccoy Congratulations Gary. The product pitch includes earning from your identity. How does that work in practice? Does WEIR AI facilitate licensing deals or just the discovery and consent layer?

WEIR AI

@kimberly_ross Great question Kimberly. In addition to discovery and consent, there is a simple claims process for recovering value if your likeness is already in use commercially somewhere. We are also working on partnerships with existing marketplaces to help people land licensing deals.

@gary_mccoy Hey Gary

What’s the exact moment or event in WEIR AI that makes me think, ‘This is worth paying for’?

WEIR AI

@andrey_chernyshev1 It's an interesting question. When we first started, we got help from some friends who introduced us to some very high profile people -- influencers, athletes, etc. -- and we shared our concept and asked them what they thought. I don't know that we fully internalized what they told us then, but we definitely get it now.

First, most of them didn't want to pay for protection because they felt that the platforms were creating the problem, so the platforms should clean it up.

The second thing they said was, they would consider paying only if it was an investment with some kind of tangible return -- that return could be money or it could be other things.

My team is probably sick of me making the salted caramel analogy -- that salt is pretty good and caramel is pretty good, but put them together and something amazing happens. Protection plus monetization is the salted caramel of public identity rights management.

For people who are not high profile, the rationale for paying tends to come from past experience with the consequences of lacking visibility.

@gary_mccoy Yeah I see. This is a real tough point to find an exact spot where the product brings the value. But it should be trackable and measurable.

I asked my product management co-pilot to dig into your market, segment it, and propose a specific aha moment. What it says:

hope it could be useful

Do you have analytics to measure such aha moments in user behavior?

@gary_mccoy @andrey_chernyshev1 If people think that the platforms have some responsibility to clean up this mess, then it's the platforms you may ultimately sell to at the end of the day, which wouldn't be a bad thing, IMO. Find out what they would pay for and why.

BrandingStudio.ai

Tal's framing of the core paradox is what makes this compelling: the technology capable of protecting you has become so legally toxic that the companies who built it won't touch it. Which means the bad actors operate freely while individuals have no recourse. That's a real structural problem, not just a product gap.

The subscription model where you're the customer, not the product, is also the only business model that makes this mission credible long term. Gary's list of safeguards is thorough but that single line does more trust-building work than all the features combined.

As someone who professionally orchestrates AI image models, I find the "earn from it" side of the positioning underexplored and genuinely interesting. How does the licensing flow work in practice when a brand or platform wants to use someone's likeness commercially? Is WEIR handling the transaction layer or just the discovery and consent layer? Congrats on the launch!

WEIR AI

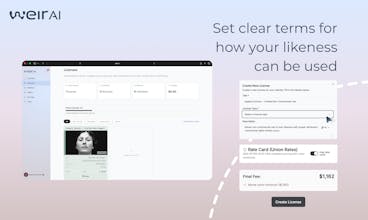

@joao_seabra Thanks for the question Joao. The licensing turned out to be very difficult to figure out -- and I'm sure customers and the market have more to teach us. IP agreements, including those involving your name, image, and likeness, are incredibly complex to compose, understand, and enforce. Our goal was to radically simplify and automate the process, and we had several false starts.

How it works now is that we've created an initial set of predefined license types (presently 8, described at https://weir.ai/license-types) that encapsulate all of the critical terms and combine them with a very short list of settings (e.g. expiration dates, rates, etc.). Some of those we designed ourselves, like the default "Protect" license, which limits use of your likeness to non-commercial purposes, or the experimental "Sora Cameo" license, which lets you get paid to let people add you to their Sora videos. Others, like the SAG AFTRA New Media type, are designed to reflect the terms of that org's existing membership license.

So, in practice, users can pick a license, add a few settings, and publish, either privately or to the public. If it's to the public, they can share it directly themselves or, as we establish the marketplace partnerships, those licenses can flow there via our APIs.
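As a rough illustration of the "pick a license, add a few settings, publish" flow described above, here is a minimal Python sketch. It is not WEIR AI's data model or API: the class names, the `commercial_use` flag, the `rate_usd` field, and the `permits` check are all hypothetical, and treating "Sora Cameo" as a commercial-use type is an assumption made only for the example. Only the license names and the kinds of settings (expiration dates, rates, private or public) come from the reply.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum
from typing import Optional


class Visibility(Enum):
    PRIVATE = "private"
    PUBLIC = "public"


@dataclass(frozen=True)
class LicenseType:
    """A predefined bundle of terms (hypothetical shape)."""
    name: str
    commercial_use: bool  # assumption: one flag stands in for the real terms


# Two of the types named in the thread; the flag values are illustrative.
PROTECT = LicenseType("Protect", commercial_use=False)
SORA_CAMEO = LicenseType("Sora Cameo", commercial_use=True)


@dataclass
class PublishedLicense:
    """A license type plus the short list of per-user settings."""
    license_type: LicenseType
    visibility: Visibility
    expires_on: Optional[date] = None   # hypothetical setting
    rate_usd: Optional[float] = None    # hypothetical setting

    def permits(self, commercial: bool, on: date) -> bool:
        """Return True if a use of this kind is allowed on the given date."""
        if self.expires_on is not None and on > self.expires_on:
            return False
        # Non-commercial use is always allowed; commercial use needs the flag.
        return self.license_type.commercial_use or not commercial
```

The point of the sketch is only that a small, fixed set of types plus a handful of settings can replace a long free-form agreement; the real terms are whatever the published license types define.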

BrandingStudio.ai

@gary_mccoy This is really thoughtful design. Predefined license types that abstract the legal complexity while still covering edge cases like SAG AFTRA terms is the right approach. The Sora Cameo license in particular is a great example of staying ahead of where the market is going. Looking forward to seeing how the marketplace partnerships develop. 🙌

WEIR AI

@joao_seabra Thanks. I would love to say that we brilliantly saw exactly how this should work in advance, but the truth is that we just got out and talked to a lot of people and paid attention to what they said.

WEIR AI

@joao_seabra I appreciate it. I can't tell you how long we struggled with this, but trying to put a 20-page DocuSign in front of people who aren't IP lawyers is a non-starter. I believe that our license types will get smarter and smarter and easier to manage over time.

WEIR AI

Thanks, @joao_seabra . I'll add that the environment is such that there is little incentive (even *negative* incentive) to research and develop new technologies that can at once protect people's privacy AND their rights. That is, it's affecting not just the product development, but the very early stages of AI research into alternative, ethical solutions. So people are left exposed.

Hi @gary_mccoy ,

Saw WEIR AI on Product Hunt — the identity ownership concept is compelling, especially in the AI era.

One thought: the headline feels broad, and “identity” can mean many things. Narrowing it around a specific outcome (e.g., AI-era reputation protection or data ownership monetization) could make the value more immediately tangible.

Curious who you see as the primary user right now.

WEIR AI

@harsh_upadhyay10 Hi Harsh -- You can't see it, but I'm smiling. How to clearly communicate what it is WEIR AI does and who it's for, both in the market and on PH, is quite the challenge.

Tal and I have been working in this space for quite a long time and realized that there really wasn't any existing terminology to refer to it, so, after a bunch of surveys, trial and error and testing in the market we landed on the term "public identity" last year as being the most helpful way of describing it.

To the extent that there was a pre-existing term, it was NIL or "Name, Image and Likeness" but what we heard over and over was that either A) people didn't know what it meant or B) they associated it with college sports.

But to get to your main question, the primary users today are creators, athletes, and performers. More generally, it's high profile individuals who depend upon their likeness for their business, and most of our marketing materials are directed towards them and the teams that support them (i.e. their human agents, business managers, legal representation, publicists, assistants, etc.).

What has resonated most with this audience is combining the concepts of protection and monetization. We found that talking about protection alone or monetization alone was far less effective than combining the two. We are trying very hard to communicate in ways that audience understands and finds compelling.

WEIR AI

@harsh_upadhyay10 Sorry if I'm repeating myself -- I thought I replied earlier today, but I don't see my reply here.

We are focusing on high profile individuals including influencers, performers, and athletes today from a marketing perspective -- but if you squint you can see that we have something for people who are not high profile at all and who just want protection. We're a small company and are avoiding boiling the ocean so to speak.

We coined the term "public identity" -- how you show up online -- to distinguish our focus from other forms of "identity" like your driver's license or your cultural heritage etc. We try to use that term consistently but from time to time, we use plain ole identity as shorthand.

@gary_mccoy

That’s helpful context — “public identity” as how someone shows up online is a much clearer frame.

For creators and athletes especially, it almost feels like positioning WEIR AI as the control layer for your digital likeness could resonate strongly — protecting it while enabling monetization.

Curious whether you’ve experimented with framing it that way

WEIR AI

Hey Product Hunt.

I'm Tal, co-founder and CTO of WEIR AI.

I've spent twenty years building face recognition systems, first in academia, then leading face recognition development at AWS and Meta. I know how this technology works, and I know what it makes possible, for better and for worse.

Here's the problem: your face shows up in places you never agreed to. Social media posts, ad campaigns, AI-generated content. Sometimes you don't even know it's happening. And right now, there is very little you can do about it.

Why? Because the technology that could help, face recognition, has become so legally toxic that no large company will touch it. Billions in fines and a tightening web of biometric privacy laws mean that the companies with the resources and expertise to protect you have every incentive to stay far away. So the tools that could give you control over your own face simply don't exist. Meanwhile, the bad actors using your likeness without permission don't care about any of that.

We built WEIR AI to close that gap. Our technology was designed from scratch to be privacy-preserving at the algorithmic level, not as a legal patch on top of old systems. That's what makes it possible to do what nobody else will: find where your face appears and put you in control of it.

You decide the rules: take something down, require attribution, or get paid when someone uses your face commercially. Your face, your call.

For creators, public figures, and professionals whose appearance is part of their livelihood, this is not a hypothetical problem. It is happening to them right now, every day.

My co-founder Gary McCoy and I started WEIR AI as a public benefit corporation because we believe the people who built the technology that makes face recognition possible (yes, that includes me) have a responsibility to also build the tools that put control back in people's hands.

We call it Public Identity Management. Try it and let us know what you think.

Tal and the WEIR AI team

@tal_hassner The founder journey is a tumultuous one but you are indeed here now and this is great! Congrats on your launch, I think this is a great product that is so needed. Does this work on only personal identity or can you keep an eye on say… your company as well?

@gary_mccoy this was actually in response to your post about the WEIR journey👆🏽

WEIR AI

Thank you @jacklyn_i all the same. And, yes, it is definitely tumultuous! But hopefully worth it, if we can help protect people's rights.

This is a really important space, especially with how easy it’s becoming to replicate someone’s identity with AI

Curious — how do you handle false positives or misidentification in detection?

Because in something as sensitive as identity tracking, even small inaccuracies could have serious consequences

WEIR AI

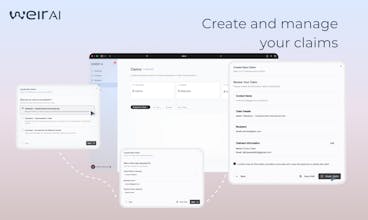

@shrujal_mandawkar1 We handle false positives in a few different ways. First, since we train many of our own AI models from scratch, we prioritize precision over recall so this limits the likelihood of false positives.

Second, while our system automates much of the matching of mentions that we find for you, we still show them to you and let you signal us when something seems off. If you look below, this is a mention I pulled from my account today and you can see a "Not Me" button that you can press to tell us this is a false positive, which we use to improve your results.

(Sorry if I posted this already -- I thought that I'd replied but can't find my reply here).
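To make "precision over recall" concrete, here is a small Python sketch of one common way to express that trade-off: choose the lowest match-score threshold that still meets a target precision on labeled examples. This is a generic illustration, not WEIR AI's method. The `Candidate` type, the score range, and the idea that "Not Me" presses supply the negative labels are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class Candidate:
    score: float    # hypothetical similarity score in [0, 1]
    is_match: bool  # label; False could come from a "Not Me" press (assumption)


def precision_recall(cands: List[Candidate], threshold: float) -> Tuple[float, float]:
    """Precision and recall if every candidate scoring >= threshold is shown."""
    shown = [c for c in cands if c.score >= threshold]
    true_pos = sum(c.is_match for c in shown)
    total_pos = sum(c.is_match for c in cands)
    precision = true_pos / len(shown) if shown else 1.0
    recall = true_pos / total_pos if total_pos else 0.0
    return precision, recall


def pick_threshold(cands: List[Candidate], min_precision: float) -> Optional[float]:
    """Lowest threshold meeting the precision target, which keeps recall
    as high as possible among thresholds that meet it."""
    for t in sorted({c.score for c in cands}):
        precision, _ = precision_recall(cands, t)
        if precision >= min_precision:
            return t
    return None  # no threshold reaches the target on this data
```

Raising `min_precision` pushes the threshold up, so fewer wrong matches are shown at the cost of missing some real ones. Each additional labeled negative shifts the numbers the threshold is chosen from, which is one plausible way a feedback button could tighten results over time.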

@gary_mccoy That makes a lot of sense — prioritizing precision + user feedback loop is a solid approach

The “Not Me” signal is especially interesting since it helps personalize detection over time

Curious — are you also thinking about adding confidence scores or explainability around matches?

So users can understand why something was flagged

WEIR AI

@shrujal_mandawkar1 Shrujal -- as you suspect, we do have all of that information and more, but I'm not sure if the audience we are presently targeting will want to see this. We are considering enhancing the UX to make it clearer exactly what was matched. You can think of our methodology more as classification, so we can't, for instance, draw a box around someone's face, because this is not something we compute.

This is a longer-term goal, but we do want to publish and make data available to researchers and civil society. When I worked at a big company, there were whole teams to help product/eng teams do this. We don't have such a team so it will have to come a little later down the line.

@gary_mccoy That makes sense — especially if the current audience prioritizes simplicity over technical transparency.

But I can see how exposing more detail around the classification logic could become really valuable later, especially for researchers or regulators looking to understand how identity detection systems behave.

We've actually seen a lot of edge cases appear in systems dealing with identity, biometrics, and user-generated signals.

If you ever plan external security or robustness testing as the platform grows, happy to take a look and share feedback — products in this space tend to surface interesting edge cases over time.

Netlify

Hey PH fam 👋

I’m thrilled to bring WEIR AI to the global tech & startup community today. This one is personal for me.

A few months ago, I sat down with Gary, WEIR’s founder, and within minutes I knew this wasn’t just another privacy tool. Gary has lived this problem — testified in billion-dollar lawsuits around privacy and biometric data, watched AI make identity manipulation nearly free, and decided to do something serious about it.

That conversation stuck with me. So here I am. 🙏

Here’s the uncomfortable truth most of us haven’t fully reckoned with:

Your public identity is out there. And you have almost no control over it.

Your name, your face, your likeness — they’re being scraped, copied, and used every single day. AI has made it easier than ever to manipulate who you are online. And the fallout? It can affect your reputation, your freedom, your finances.

We talked a lot about athletes — NBA players, for example — who are increasingly waking up to the fact that their image and likeness is being exploited in ways they never consented to. But this isn’t just a celebrity problem. It’s everyone’s problem.

WEIR AI is the platform that finally puts you in the driver’s seat.

What makes it stand out:

→ 🔍 Monitor mentions of yourself online — including hidden ones

→ 🧾 Get a full public identity checkup

→ 📋 Set YOUR terms for how your identity is used

→ 💰 File claims or license your likeness on your terms

→ 🏛️ Built as a Public Benefit Corporation — their mission is baked into their DNA

Gary and his co-founder Tal built the identity recognition tech from scratch. Nothing off-the-shelf was good enough. That tells you everything about how seriously they take this.

If you care about privacy, consent, or simply owning what’s yours, this is for you.

Check it out and drop your questions, comments or thoughts below ⬇️

Big congrats to Gary, Tal, and the entire WEIR AI team. The world needs what you’re building. 🚀

WEIR AI

@thisiskp_ Thanks so much KP. I remember doing my first Product Hunt many years ago with a startup that was also dealing with trust -- in that case, a service to help landlords fairly and quickly evaluate housing rental applications. It's been a long time since then, but it's great to see how the community continues to grow. I looked down the list of other companies who are here today and found one that I may want to partner with. Very cool.

Strong mission Gary. If I sign up today, what does WEIR actually do for me right away?

And if someone uses my photo or identity without permission, how do you help me fix it?

WEIR AI

@vik_sh Hi Viktor

Upon sign-up, we immediately create a "Protect" license for your name and start searching for your mentions. When we find them, we alert you and give you our analysis and recommendations for next steps, including using our claims service.

If you want deep mention checks where we find you in hidden/untagged scenarios, we first ask you to verify your identity (pretty quick) and ask for your permission to proceed.

There’s more but that’s the basics. Does that help?