Case Study: how Product Hunt can improve AI visibility in 2026

Product Hunt is best known for its homepage, a daily leaderboard of the most creative and innovative products on the internet. Makers go all out to win launch day, because that visibility matters. Product Hunt also plays a significant role in how products appear in Google search results.

What surprised us was that AI assistants like ChatGPT were rarely citing Product Hunt in product recommendations.

AI assistants such as ChatGPT and Gemini rely on reviews, alternative lists, and structured product information gathered across the web. Product Hunt is strong across all three. In theory, this should make Product Hunt a natural source for AI-driven product recommendations and comparisons. In practice, it was not happening.

We set out to understand why LLMs were not citing Product Hunt and whether we could change that. The most recent Orbit Awards provided a clean test case, and a new tool called @Gauge made the impact measurable. Gauge tracks LLM visibility across major AI models using a large, search-informed set of prompts, giving us a statistically meaningful way to measure citation rate.

We focused on AI dictation apps, the first Orbit Awards category, as a controlled test, and aimed to promote AI visibility through a new style of category page. After several targeted iterations, Product Hunt shifted from near zero AI citations to consistent inclusion across multiple models. We are now rolling these changes out across Product Hunt. Product Hunt is becoming part of the AI retrieval layer.

Key Lessons

We view AI visibility as a new distribution layer. Our goal is to ensure that authentic community signal on Product Hunt is systematically surfaced in AI product research workflows.

1. AI visibility is measurable

Track citation rate like SEO. Instrument it, monitor it, iterate.

2. Terminology drives retrieval

If your language does not match dominant queries, you will not be cited. Naming alone can materially change visibility.

3. Authority beats volume

One high-signal, well-structured page can outperform dozens of lower-quality URLs.

4. Model behavior is volatile

Citation patterns shift after model updates. Continuous monitoring is required.

How we're tracking AI Visibility

Many tools systematically run prompts against several AI models daily and measure how visible a product is in the answers. We're using a new tool called @Gauge. We chose it because a) its citation tracking is more useful than the alternatives we've considered (in part because they quickly shipped some of our feature requests!) and b) its prompt generation seems to be quite good, allowing us to create representative prompts to track without introducing our own bias.

For the purpose of this post, we did not alter the prompts generated by Gauge, and monitored visibility with respect to those prompts over time. This is directionally valid, even if it is not a good absolute measurement.

We care about cross-model performance, but we have focused on ChatGPT and Google AI Overview as we believe they are the highest-impact channels.

Wispr Flow and Superwhisper AI visibility

We'll showcase how Product Hunt now contributes to significant LLM visibility for a well-known product and a promising underdog.

Wispr Flow

@Wispr Flow has very strong AI visibility. However, its visibility in ChatGPT was cut in half following a ChatGPT update:

The same update appeared to cause ChatGPT to cite our article much more frequently. As a result, we significantly softened the blow to Wispr Flow’s visibility drop:

Wispr is mentioned in 13% of ChatGPT answers that research and compare voice-to-text tools.

Product Hunt pages that mention Wispr are cited in 6.7% of those answers. Wispr Flow is mentioned in ~62% of these citations (not shown), meaning Product Hunt is contributing to around 32% of Wispr Flow’s ChatGPT visibility (6.7% × 62% ≈ 4.2% of answers, out of the 13% that mention Wispr).

It’s important to point out that we don’t know how much causation there is, i.e. what the visibility would look like without these citations.
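The arithmetic behind the ~32% figure can be reproduced in a few lines. This is a sketch under one assumption: that an answer which both cites a Product Hunt page and mentions Wispr Flow counts as "Product Hunt contributed". The function and variable names are ours, and the result describes co-occurrence, not causation.

```python
# Figures quoted in the post for ChatGPT answers about voice-to-text tools.
wispr_mention_rate = 0.13        # Wispr is mentioned in 13% of answers
ph_citation_rate = 0.067         # Product Hunt pages are cited in 6.7% of answers
wispr_share_of_citations = 0.62  # Wispr Flow is mentioned in ~62% of those


def contribution_share(mention_rate, citation_rate, share_of_citations):
    """Fraction of a product's mentions that co-occur with a citation."""
    # Share of all answers that cite the source AND mention the product.
    cited_and_mentioned = citation_rate * share_of_citations
    return cited_and_mentioned / mention_rate


share = contribution_share(
    wispr_mention_rate, ph_citation_rate, wispr_share_of_citations
)
print(f"{share:.0%}")  # prints 32%
```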

Superwhisper

@superwhisper is another excellent product that has less AI visibility than Wispr. Once again, Product Hunt plays a critical role in Superwhisper being visible in LLM search.

For some reason, Google AI Overview seems to prefer mentioning Superwhisper (at a rate of 5.5%) compared to ChatGPT (at a rate of 1.6%). In both cases, Product Hunt is contributing a meaningful share of that visibility, but the effect is more pronounced in Google AI Overview.

We shipped a meaningful change to our page around Jan 22 (see below). After this change, Superwhisper is mentioned in answers citing our page around 1.6% of the time.

Notably, our visibility comes from essentially one URL (a second URL is cited 0.1% of the time). In comparison, the other non-biased sources (Reddit and YouTube) have dozens of URLs contributing to visibility:

We also appear to be contributing twice as much to Superwhisper's AI visibility as the best-performing Reddit thread or YouTube link (not shown).

This signals that LLMs consider Product Hunt pages to have high signal, authenticity, and authority.

How to AEO

So what are the lessons we learned from optimizing Product Hunt pages for LLM visibility? We feel we've barely scratched the surface with this small, targeted experiment, but we've discovered that we seem to have the leverage needed to move the needle.

Page content

We have done a lot of experimentation on what we show on category pages.

Prior to revamping the AI Dictation Apps page, there were essentially no citations. (Unfortunately, our Gauge data doesn't go back far enough to see this cleanly.) Changing from the old version to the new "roundup" page created a baseline citation rate of around 0.4% across all models.

Recently, we’ve begun sourcing frequently asked questions from community content. When we shipped this, the ChatGPT citation rate 10x'd.

We initially attributed this jump to the FAQ addition. In retrospect, the timing aligns more closely with a ChatGPT model update (see below), making the causal link unclear.

However, other models did begin to cite the page more often. For instance, Google AI Overview citations roughly doubled after introducing the FAQ.

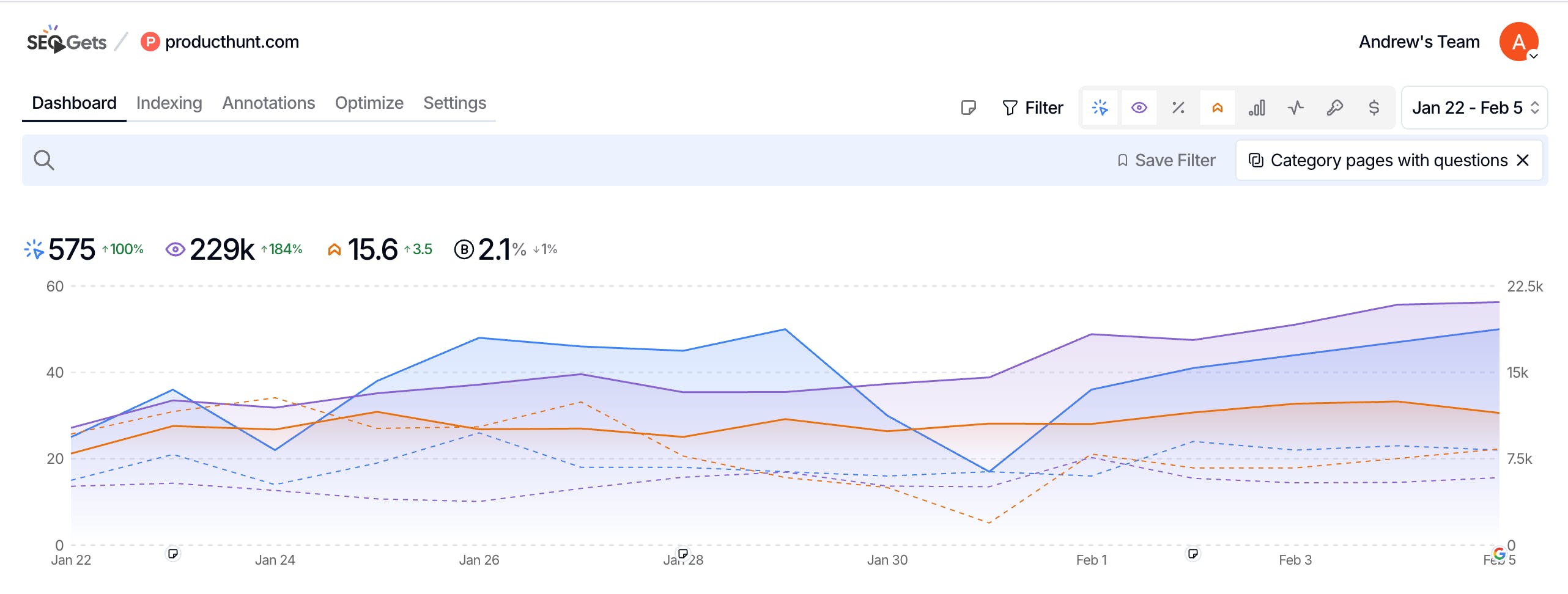

While the impact is much noisier, the same feature applied to other category pages increased search impressions by nearly 200% -- this suggests that Q&A content can increase AI visibility in more cases than just our dictation app category page.

SEO optimization

Under the hood, LLMs use web search to research on the user’s behalf. LLM search queries are different from human Google queries, but there are similarities. For AI dictation apps, we realized that most humans look for speech-to-text software, not AI dictation apps. We changed the title of the category page from “The best AI dictation apps” to “The best AI dictation and speech-to-text software” (on January 7). This tripled our category page citation rate overnight.

So, SEO fundamentals are a key precursor to AI visibility. This should be a surprise to nobody, but this minor change is a great anecdote to highlight the importance of SEO fundamentals.

Hard won lessons

LLM games

ChatGPT and other AI bots can still be easily gamed. Old-timers will remember the days of keyword stuffing to game Google search results. We are in the early, easily-gamed phase of LLM search, similar to early SEO. A common tactic right now is mass-producing authoritative-sounding listicles where the publisher names their own product as “best” across multiple categories. LLMs scrape and confidently cite this content.

This dynamic rewards self-promotion over user signal. We believe that authentic user content is the best way to inform product selection decisions. We also believe that it is in the best interest of OpenAI, Google, and Anthropic to address this gaming to serve their users.

AI bots receive frequent updates. After some of them, we see Product Hunt's citation rate rise while some unbiased sources (Zapier, Reddit) and biased sources (Wisprflow.ai) fall, and other biased sources (speechify.com) jump significantly.

LLM differences

Somewhat unsurprisingly, there is a lot of variability between chat agents (ChatGPT vs. Gemini vs. Claude, etc.), and each agent implements web search differently. Our AI Dictation App category page is almost never cited by Microsoft Copilot, which uses Bing for web search, or by Perplexity, which we were unknowingly blocking due to a Cloudflare<>Perplexity feud.
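One cheap sanity check for accidental blocking is to test your robots.txt against the user agents that AI assistants use. The sketch below uses Python's standard `urllib.robotparser`. The agent names in `AI_USER_AGENTS`, the sample robots.txt, and the example URL are assumptions for illustration. Note that this covers only the robots.txt layer: a block applied at the CDN or firewall level, like the Cloudflare one described above, would not show up here and needs to be checked in server logs or CDN settings.

```python
from urllib.robotparser import RobotFileParser

# Assumed user-agent tokens for AI crawlers and assistants; verify the
# current names against each vendor's documentation before relying on them.
AI_USER_AGENTS = ["GPTBot", "ChatGPT-User", "PerplexityBot"]


def blocked_agents(robots_txt: str, url: str, agents=AI_USER_AGENTS):
    """Return the agents that robots.txt forbids from fetching `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


# Hypothetical robots.txt that blocks one AI crawler and allows the rest.
SAMPLE_ROBOTS = """\
User-agent: PerplexityBot
Disallow: /

User-agent: *
Allow: /
"""

blocked = blocked_agents(
    SAMPLE_ROBOTS, "https://example.com/categories/ai-dictation-apps"
)
print(blocked)  # ['PerplexityBot']
```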

Our opinion is to focus on the chatbots where your users actually search. Visibility is model-specific.

Where are we heading

We see all of the hard work that makers put into their launches. After launch, Product Hunt users leave genuine reviews and toss around ideas in discussions. This information is tremendously helpful for those doing product research on ChatGPT and other AI chatbots.

We view AI visibility as a new distribution layer. Our goal is to ensure that authentic community signal on Product Hunt is systematically surfaced in AI product research workflows.

Replies

Citation rate as KPI. They are treating AI assistants as distribution intermediaries, and they’re optimizing content structure, terminology, FAQ schema, and page authority to increase retrieval probability.

Hey Andrew, thanks for sharing this detailed post outlining how Product Hunt is optimizing for AEO.

The PH community has so many authentic and credible voices, unlike Reddit's anonymity. Happy to see the AEO experiment was a success!

I had noticed the category page structure changes a month ago or in December and had guessed it must be for LLM SEO.

Kudos on the great work! :)

Listnr AI

Another non-negotiable is having flawless technicals - JSON-LD, robots, FAQ schema etc. LLMs keep changing their source weightage: Reddit used to be the highest a few months ago, now it's Wikipedia - nothing beats having the best product that your users talk about on social :)

Product Hunt

@ananay_batra Cloudflare recently shipped markdown conversion, which we're looking into as a very scalable, low-effort way to improve technical LLM optimization.

We hope LLMs get smarter about their source weightage. As you can see in this post, they very frequently cite heavily biased articles, e.g. `ProductX.com` concluding that "Product X is the best voice dictation software".

Raycast

@ananay_batra @andrew_g_stewart it would be awesome if I could just append `.md` to any Product Hunt slug and get the markdown version!

You guys should also implement Content Signals.

Product Hunt

Would love to hear thoughts from makers and the community on this post!

This is such an insightful read.

My key takeaways as a Product Hub owner:

You might want to list your product in as many product categories as possible (up to 3)

Ideally, you'd rank in the best products for each category

To rank higher, you'd need more followers, forum mentions, and founder reviews

Anything that I missed?

Also I found that by adding more product categories, Product Hunt might suggest less relevant alternatives.

@mikekerzhner @andrew_g_stewart As Product Hub owners, is there anything we could do to improve it?

Product Hunt

This is roughly right. We consider how well the product's launch did, but the driving factor is how many founder reviews the product has.

For the orbit awards, though, we are taking a more editorial stance, and applying our judgement. The orbit award winners are currently perma-featured on the category page, and will continue to receive visibility. We will re-evaluate the set of products periodically; if a standout product comes into play in the meantime, it would make sense to feature them on the category page!

ContentKing

Great work, team!

Siteline

Thanks for sharing, @andrew_g_stewart. I was also surprised not to see PH in the citations, considering that sites like G2 are featured quite often. I think that community-driven insights from Product Hunt are much more authentic and useful, so I hope the platform will get featured more and more.

How data-driven do you consider this approach? While reading your observations, I got a feeling that there's still room for assumptions, though no doubt the tool gives you direction. Most probably that's because even large prompt sets still give you a simulated result (=assumption), not the real data.

Since LLMs not only use web search but also use live agentic web browsing, an alternative approach to measurement could be to leverage server log data from your website. Incoming requests from bots and agents show what AI is looking at to train/index/refer, so you can understand which pages and parts of the website get overlooked. Kind of an additional data layer, to analyze together with prompt visibility metrics.

I'm now trying this mixed approach and there are already some low-hanging fruits: Cloudflare had been blocking AI bots for our site, so my optimization win was to just toggle this setting off. For AI Agent Analytics, I have been using Siteline which is launching on PH next week (shoutout to @davidkaufmann and @vzotov ). I have been their beta user for a while and provided some feedback from the Marketing standpoint. Would love if you have a look and join the conversation.

Really insightful breakdown on how structured content impacts AI retrieval. I’ve also noticed that inconsistent CSV exports from complex spreadsheets can affect how cleanly datasets are reused across AI workflows. I recently tested a formatting approach shared on https://www.wps.com that helped maintain structure during export without needing manual cleanup.

Product Hunt

@yulianazarenko

I think it's directionally correct, but not accurate. That's appropriate for measuring the success of an effort to increase AI visibility, but can't be used for any precise business modeling, for instance.

We're not aware of any technique that is significantly more accurate than generating a diverse set of AI prompts that are informed by actual search volume (e.g. through Semrush). Of course, I'd expect something much more accurate one day -- will Google provide some sort of "sanitized" set of prompt-volume data, like they do in GSC for traditional search?

Some vendors use data sourced from sketchy chrome extensions that claim to track actual prompts. But, those prompts would be biased by the set of users who choose to install those sketchy chrome extensions.

Connecting/correlating prompt tracking to live agentic web browsing is smart! This is how we noticed that Perplexity was unknowingly blocked from our site due to bad blood between them and Cloudflare. Incorporating that into Siteline makes a lot of sense.

It would be really nice if someone could connect agentic web browsing to Google Search Console impressions for long-tail "bot-like" search queries to try to reverse engineer live prompt volume. @davidkaufmann / @vzotov / @Gauge -- could you make that happen?

Siteline

@yulianazarenko @vzotov @andrew_g_stewart

Thanks for the shoutout Yulia!

Andrew, glad to hear you see value in the agent visits data, and great catch with Perplexity. This is exactly the type of insight we want to unlock for users.

Broadly agree here. We still see prompt tracking as valuable, but you can use the agent visits (from user-initiated bots like ChatGPT-user) to make sure your prompt set is not only diverse but also representative of what users are actually asking ChatGPT. For example, if you see more ChatGPT-user agent visits on pages / blog posts pertaining to a particular feature, e.g. integration capabilities, then you know to include prompts on this topic in your set AND perhaps weight them more heavily when calculating your overall visibility. This approach, combined with reliable search volume data (like you mention), can I think make the results a bit more robust.

Interesting! Right now, we treat visit volume (from user-initiated agents like ChatGPT-user) as a proxy for prompt volume, but it’s inherently imperfect: not all prompts trigger live browsing, many responses rely on cached or previously retrieved content, and obviously browsing doesn’t always reach your site versus competitors. We’re already integrating GSC / keyword volume data, so we’ll explore correlating that with agent visits as an additional directional signal to better estimate prompt demand.

Siteline

@vzotov @andrew_g_stewart @davidkaufmann

@Siteline is live today, would love to continue this conversation with the larger community 🚀

minimalist phone: creating folders

I am not surprised that this community is always ahead. :) So happy to be a part of it :)

I recently created a launch page but have been trying to figure out the most optimal approach to get the word out. From the terrible marketing I have deployed so far, it appears the product is liked, but I have not had much put into outreach. Thanks for the tips!